Recommended videos

Recommended videos

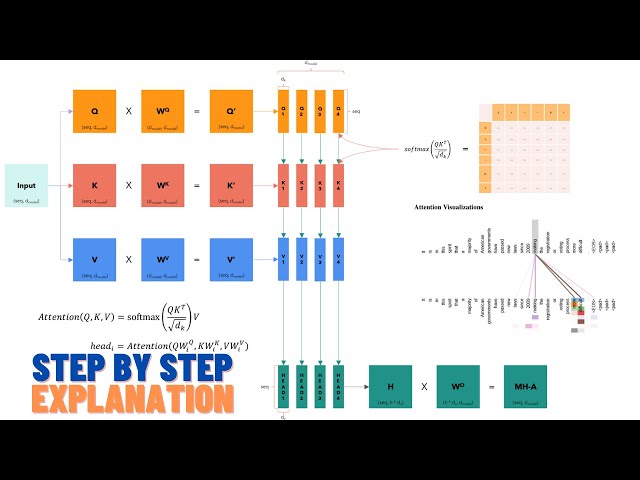

Attention is all you need (Transformer) - Model explanation (including math), Inference and Training

300,362 views

11 months ago

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale (Paper Explained)

324,581 views

3 years ago

10 – Self / cross, hard / soft attention and the Transformer

34,831 views

2 years ago

Cross-Attention in Transformer Architecture Can Merge Images with Text

Vaclav Kosar

23 March 2022
