Recommended videos

Recommended videos

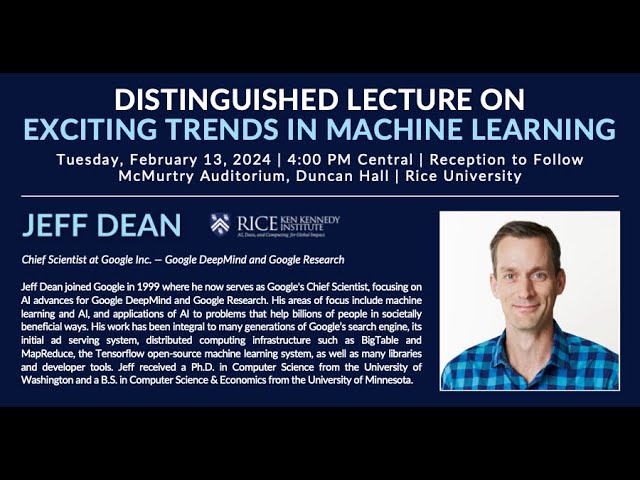

Jeff Dean (Google): Exciting Trends in Machine Learning

164,384 views

2 months ago

Train, Deploy, Evaluate, Repeat: Mastering Custom LLMs (NLP IL @ Dream 8.4.24)

135 views

2 weeks ago

LLM Safeguards - Keeping LLMs in Line

113 views

1 month ago

NLP IL - Vision-Language Club #3 Hila Chefer - Lumiere

NLP IL - Natural Language Processing Israel

Tue, 05 Mar 2024 00:00:00 GMT